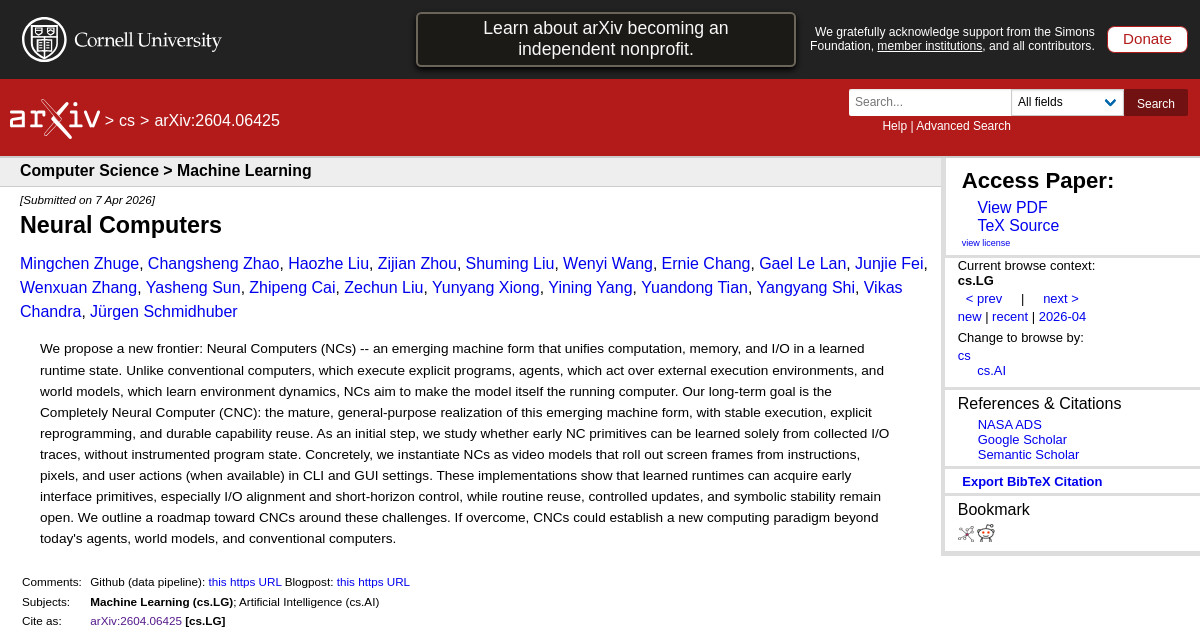

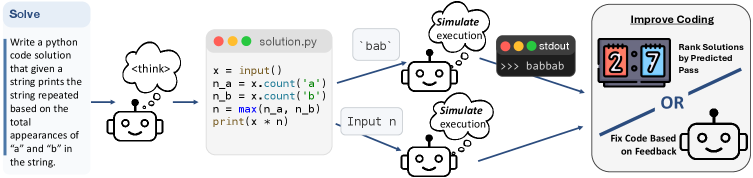

FrontierSmith: Synthesizing Open-Ended Coding Problems at Scale

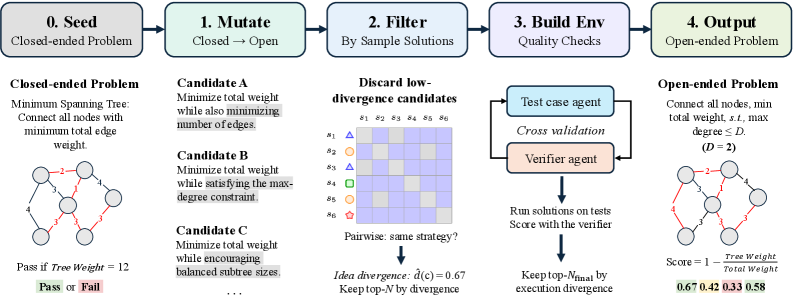

This work addresses a critical bottleneck in training better coding agents: the scarcity of open-ended programming problems that mirror real-world development challenges. FrontierSmith automatically evolves competitive programming problems into open-ended variants that elicit diverse solution approaches. It is relevant to anyone trying to improve AI coding capabilities beyond the current focus on well-defined tasks such as bug fixes and feature implementation.
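This summary does not say how FrontierSmith performs the transformation. The sketch below is only a hypothetical illustration of what "open-ended variant" can mean. A closed competitive programming task ("can these numbers be split into two groups of equal sum?") has one correct yes/no answer and is graded pass/fail. An open-ended relaxation ("make the two group sums as close as possible") accepts many valid outputs, is graded on a continuous scale, and rewards genuinely different strategies. All names here (`grade_closed`, `grade_open`, `greedy_split`, `dp_split`) and the baseline-relative scoring rule are assumptions made for illustration, not FrontierSmith's actual pipeline.

```python
from typing import Dict, List, Optional, Tuple

# Hypothetical closed task: exactly one correct answer, so grading is pass/fail.
def grade_closed(answer: bool, expected: bool) -> float:
    return 1.0 if answer == expected else 0.0

# Hypothetical open-ended variant: any assignment of items to side 0 or 1 is valid;
# quality is measured by how far apart the two group sums are.
def imbalance(nums: List[int], assignment: List[int]) -> int:
    left = sum(x for x, side in zip(nums, assignment) if side == 0)
    right = sum(x for x, side in zip(nums, assignment) if side == 1)
    return abs(left - right)

# Assumed scoring rule: 1.0 for a perfect split, 0.0 for doing no better than the baseline.
def grade_open(nums: List[int], assignment: List[int], baseline: List[int]) -> float:
    b, c = imbalance(nums, baseline), imbalance(nums, assignment)
    if b == 0:
        return 1.0 if c == 0 else 0.0
    return max(0.0, 1.0 - c / b)

# Approach 1: greedy, placing the largest remaining item on the lighter side.
def greedy_split(nums: List[int]) -> List[int]:
    assignment, sums = [0] * len(nums), [0, 0]
    for i in sorted(range(len(nums)), key=lambda i: -nums[i]):
        side = 0 if sums[0] <= sums[1] else 1
        assignment[i] = side
        sums[side] += nums[i]
    return assignment

# Approach 2: exact subset-sum DP with backtracking (only practical for small totals).
def dp_split(nums: List[int]) -> List[int]:
    total = sum(nums)
    parent: Dict[int, Optional[Tuple[int, int]]] = {0: None}
    for i, x in enumerate(nums):
        for s in list(parent):  # snapshot, so each item is used at most once
            if s + x not in parent:
                parent[s + x] = (s, i)
    best = min(parent, key=lambda s: abs(total - 2 * s))
    assignment, s = [1] * len(nums), best
    while parent[s] is not None:  # walk back through the items that reached `best`
        prev, i = parent[s]
        assignment[i] = 0
        s = prev
    return assignment

if __name__ == "__main__":
    nums = [8, 7, 6, 5, 4]
    baseline = [i % 2 for i in range(len(nums))]  # naive alternating split
    for name, a in [("baseline", baseline), ("greedy", greedy_split(nums)), ("dp", dp_split(nums))]:
        print(f"{name}: imbalance={imbalance(nums, a)}, score={grade_open(nums, a, baseline):.2f}")
```

Under the closed formulation, the greedy and DP solvers would both simply answer "yes" and look identical; under the graded variant, their different trade-offs (speed versus optimality) become visible in the score, which is the kind of solution diversity open-ended problems are meant to surface.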

Takeaways

- Open-ended coding problems are essential for training LLMs that can handle real-world development challenges.

- Automated synthesis can scale creation of diverse coding problems that elicit genuinely different solution approaches.

- Current LLM coding training focuses too heavily on well-defined tasks rather than on the ambiguous problems developers actually face.