Many-Shot CoT-ICL: Making In-Context Learning Truly Learn

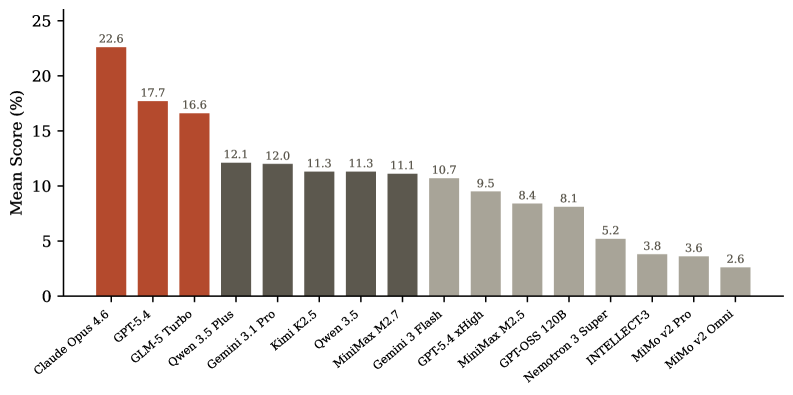

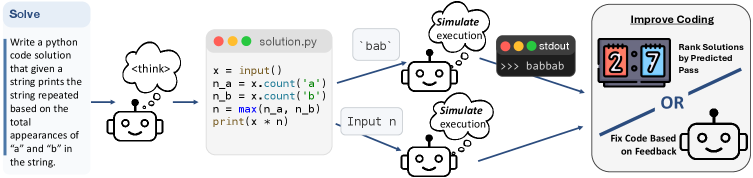

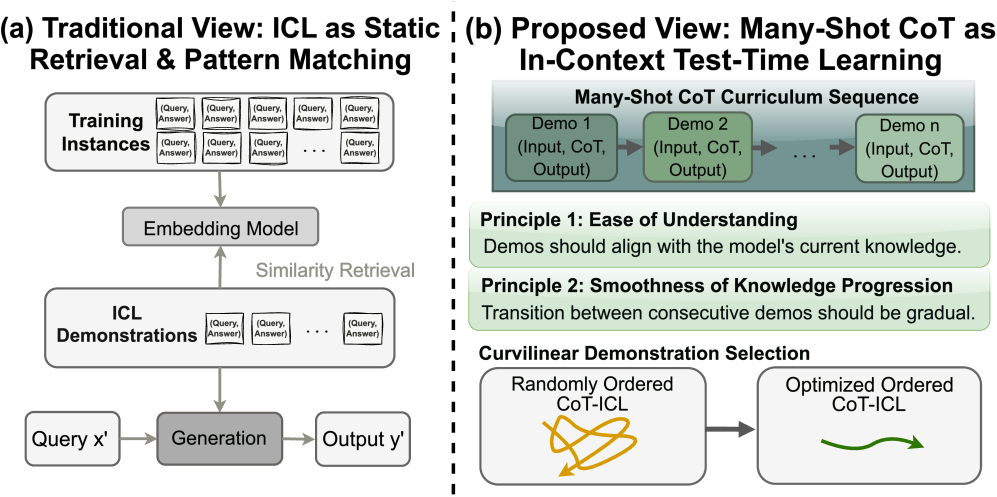

This work overturns conventional wisdom about many-shot in-context learning for reasoning tasks. More examples help with simple tasks, but reasoning tasks show unstable scaling behavior, and retrieving examples by semantic similarity actually hurts performance. The order of examples also matters more than previously thought. These findings have immediate implications for how you structure prompts and manage context in reasoning-heavy production systems.

Takeaways

- Many-shot scaling rules for non-reasoning tasks don't apply to reasoning tasks, where adding more examples can degrade performance.

- Semantic similarity poorly predicts procedural compatibility in chain-of-thought reasoning.

- Example ordering significantly impacts performance and requires careful consideration in production prompt design.
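
One way to act on the ordering takeaway is to measure how sensitive your own prompts are to it before committing to a layout. The following is a minimal sketch, not the paper's method: the demonstration schema (`question`, `rationale`, `answer`), the prompt template, and the `ask_model` callable are all hypothetical stand-ins for whatever your system uses. It builds the same chain-of-thought prompt under a few orderings of one fixed demonstration pool and reports exact-match accuracy for each on a small dev set.

```python
import random
from typing import Callable, Dict, List, Sequence, Tuple

# Hypothetical demonstration schema: keys "question", "rationale", "answer".
Demo = Dict[str, str]


def build_prompt(demos: Sequence[Demo], query: str) -> str:
    """Concatenate CoT demonstrations in the given order, then append the query."""
    blocks = [
        f"Q: {d['question']}\nReasoning: {d['rationale']}\nA: {d['answer']}"
        for d in demos
    ]
    blocks.append(f"Q: {query}\nReasoning:")
    return "\n\n".join(blocks)


def candidate_orderings(
    demos: Sequence[Demo], n_shuffles: int = 3, seed: int = 0
) -> Dict[str, List[Demo]]:
    """Return named orderings of the same demonstration pool."""
    rng = random.Random(seed)  # seeded so the comparison is reproducible
    orderings = {"original": list(demos), "reversed": list(reversed(demos))}
    for i in range(n_shuffles):
        shuffled = list(demos)
        rng.shuffle(shuffled)
        orderings[f"shuffle_{i}"] = shuffled
    return orderings


def accuracy_by_ordering(
    demos: Sequence[Demo],
    dev_set: Sequence[Tuple[str, str]],
    ask_model: Callable[[str], str],
) -> Dict[str, float]:
    """Exact-match accuracy per ordering; dev_set must be non-empty.

    ask_model is an assumed wrapper that sends a prompt to your model
    and returns only the extracted final answer as a string.
    """
    scores = {}
    for name, ordered in candidate_orderings(demos).items():
        correct = sum(
            ask_model(build_prompt(ordered, question)).strip() == gold
            for question, gold in dev_set
        )
        scores[name] = correct / len(dev_set)
    return scores
```

A wide spread in the returned scores suggests the prompt is order-sensitive, in which case the ordering should be pinned and versioned along with the rest of the prompt rather than left to whatever order retrieval happens to return.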