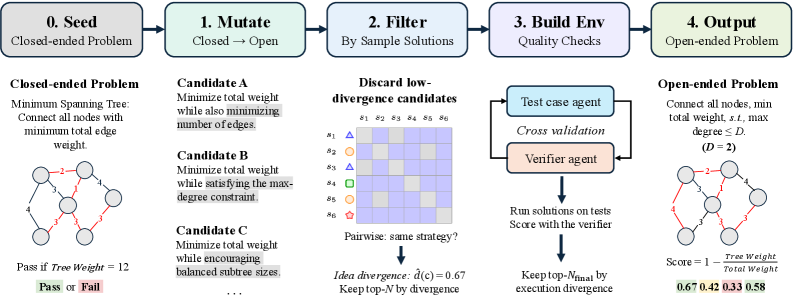

Harness engineering: leveraging Codex in an agent-first world

Essential reading for anyone building agent-first development workflows. Lopopolo shares practical insights from a Codex implementation that challenge conventional wisdom about how AI should integrate into software engineering processes. This is not another theoretical piece; it is a practitioner's guide to harnessing AI agents in real development environments where traditional tooling falls short.

Takeaways

- Agent-first workflows require fundamentally different architectural thinking than traditional AI-assisted development.

- Codex integration succeeds when Codex becomes the primary interface rather than a secondary tool.

- Production agent systems need careful harness engineering to bridge the gap between AI capabilities and developer workflows.