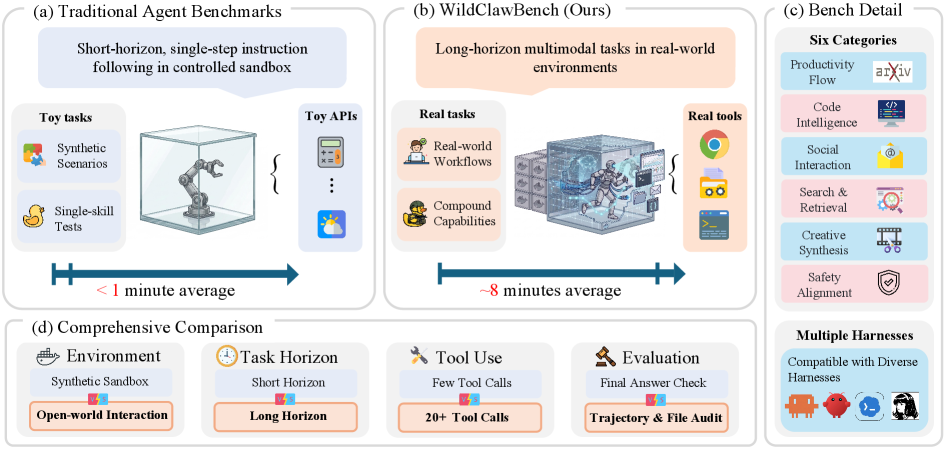

WildClawBench: A Benchmark for Real-World, Long-Horizon Agent Evaluation

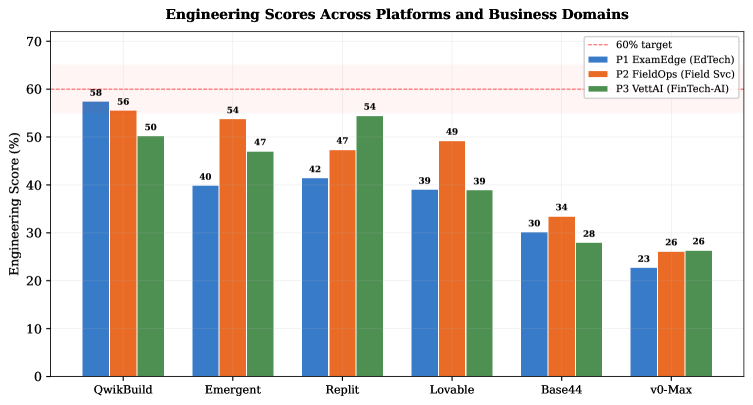

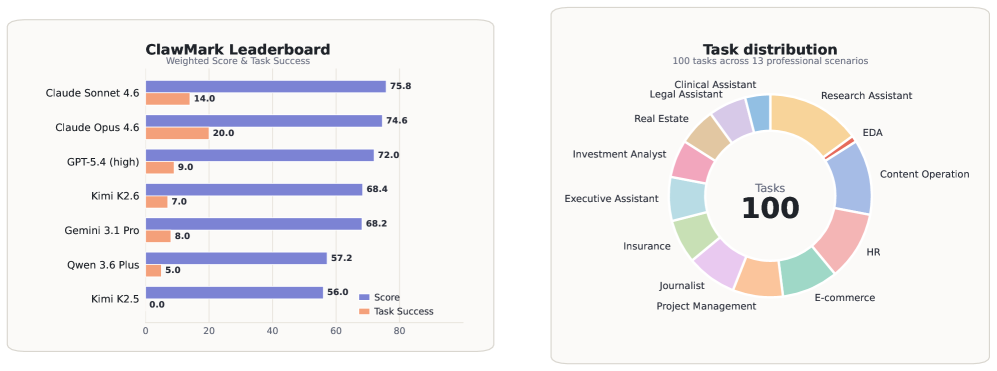

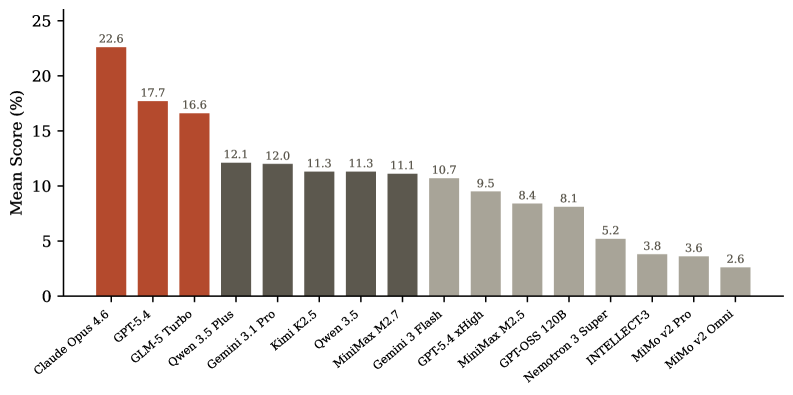

This benchmark exposes the gap between synthetic agent evaluations and real-world performance. While most benchmarks rely on mock APIs and toy tasks, WildClawBench runs agents in actual CLI environments with real tools, on tasks that take 8+ minutes. The results are sobering: even frontier models like Claude Opus achieve a success rate of only 35%. If you're building production agents, this benchmark shows what you're actually up against.

Takeaways

- Synthetic benchmarks dramatically overestimate how agents perform in production environments.

- Long-horizon tasks in native runtimes reveal fundamental limitations even in frontier models.

- Deploying agents in production requires evaluation criteria that differ significantly from those used in academic benchmarks.