HAGE: Harnessing Agentic Memory via RL-Driven Weighted Graph Evolution

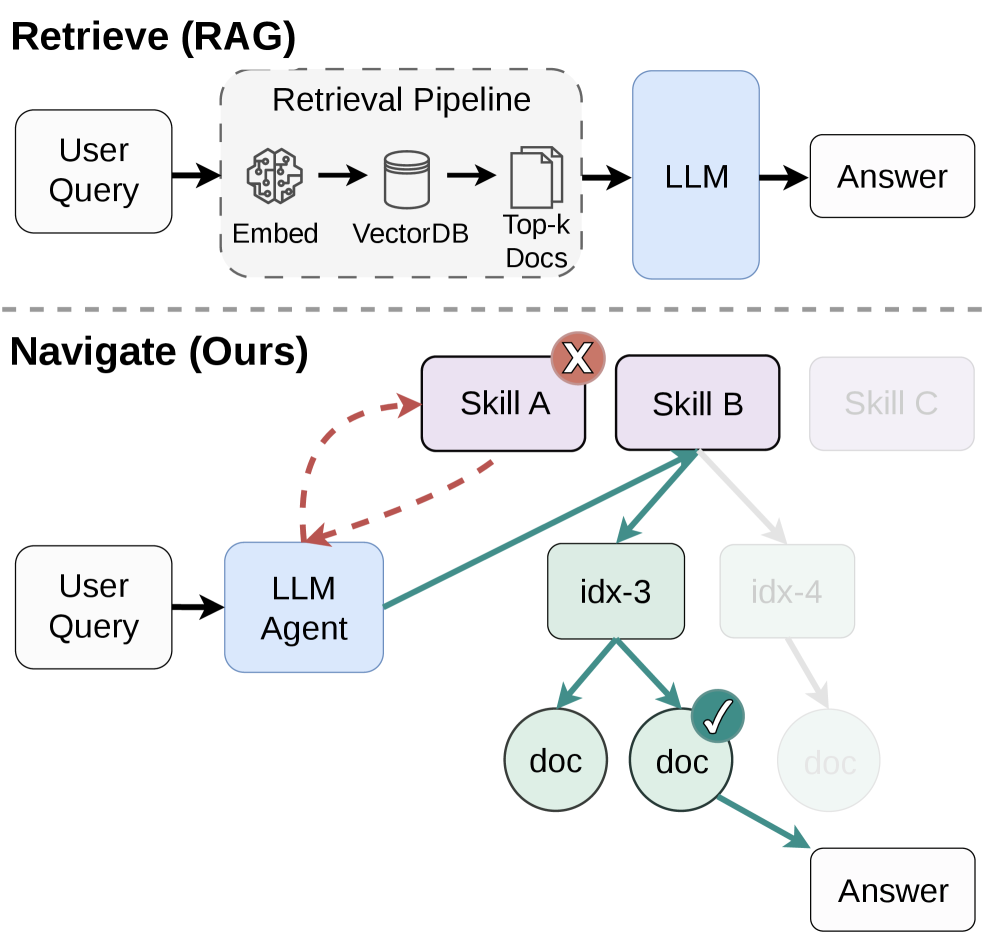

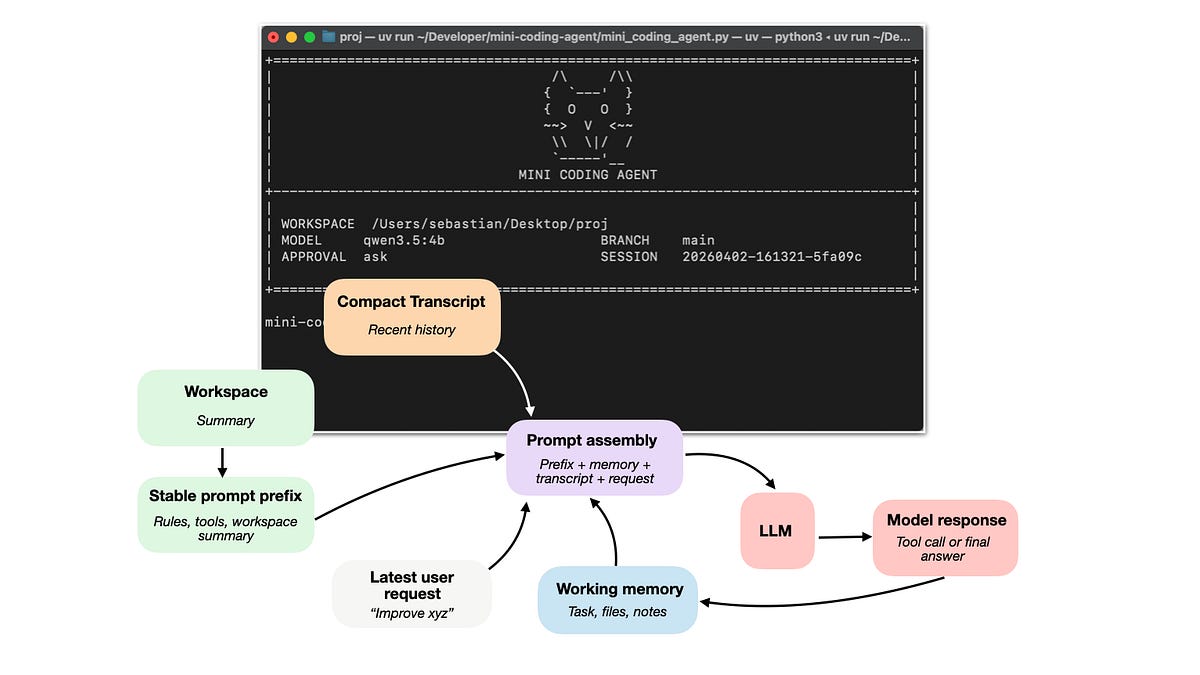

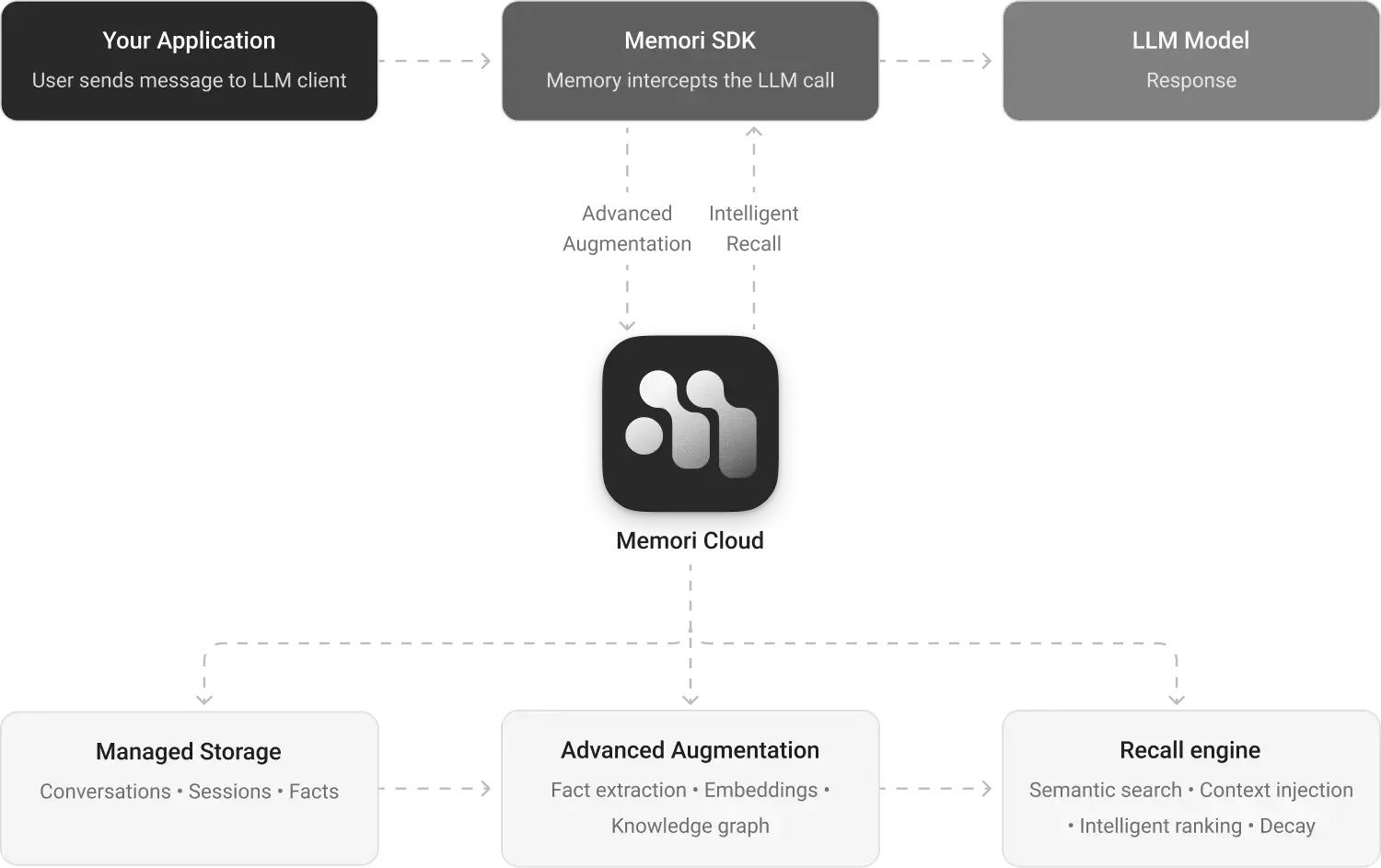

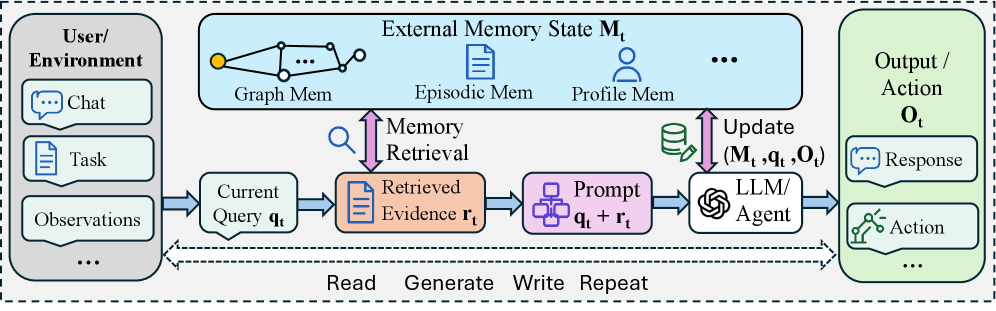

Finally, a serious approach to agent memory that goes beyond naive vector search. HAGE reframes memory retrieval as query-conditioned traversal of a graph whose relationships carry varying strength and confidence. This matters because most production agent systems still rely on flat retrieval, which ignores how the connections between pieces of information, and the weight each one deserves, depend on context. If you're building stateful agents, this offers a blueprint for a more sophisticated memory architecture.

Takeaways

- Agent memory should be organized as weighted multi-relational graphs rather than flat vector stores.

- Query-conditioned traversal enables more sophisticated retrieval than static similarity search.

- Trainable relation features allow memory systems to adapt to different types of queries and contexts.
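
To make the takeaways concrete, here is a minimal sketch of query-conditioned traversal over a weighted multi-relational graph. It is an illustration under stated assumptions, not HAGE's actual implementation: the `MemoryGraph` class, the sigmoid gate, the multiplicative path score, and the two-dimensional query vectors are all hypothetical choices made for this example, and the RL training that would update the relation features is left out entirely.

```python
import heapq
import math
from collections import defaultdict


class MemoryGraph:
    """Toy weighted multi-relational memory graph (hypothetical, for illustration)."""

    def __init__(self, relation_features):
        # relation -> feature vector; a stand-in for trainable relation features.
        self.relation_features = relation_features
        # src -> list of (dst, relation, strength, confidence)
        self.edges = defaultdict(list)

    def add_edge(self, src, dst, relation, strength, confidence):
        self.edges[src].append((dst, relation, strength, confidence))

    def relation_gate(self, query_vec, relation):
        # Assumption: relevance of a relation to a query is a sigmoid of the
        # dot product between the query vector and the relation's features.
        feats = self.relation_features[relation]
        logit = sum(q * f for q, f in zip(query_vec, feats))
        return 1.0 / (1.0 + math.exp(-logit))

    def retrieve(self, query_vec, seeds, top_k=3, max_hops=2):
        # Best-first traversal from the seed nodes. A path's score is the
        # product of strength * confidence * gate over its edges (assumption).
        best = {s: 1.0 for s in seeds}
        heap = [(-1.0, s, 0) for s in seeds]
        heapq.heapify(heap)
        while heap:
            neg_score, node, hops = heapq.heappop(heap)
            score = -neg_score
            if score < best.get(node, 0.0) or hops == max_hops:
                continue  # stale heap entry, or hop budget exhausted
            for dst, rel, strength, conf in self.edges[node]:
                new_score = score * strength * conf * self.relation_gate(query_vec, rel)
                if new_score > best.get(dst, 0.0):
                    best[dst] = new_score
                    heapq.heappush(heap, (-new_score, dst, hops + 1))
        ranked = sorted(
            ((n, s) for n, s in best.items() if n not in seeds),
            key=lambda item: -item[1],
        )
        return ranked[:top_k]


# Two relation types with opposing (made-up) feature vectors.
graph = MemoryGraph({"temporal": [2.0, -2.0], "causal": [-2.0, 2.0]})
graph.add_edge("meeting", "followup", "temporal", strength=0.9, confidence=0.9)
graph.add_edge("meeting", "decision", "causal", strength=0.9, confidence=0.9)

# The same graph ranks neighbors differently depending on the query vector.
temporal_hits = graph.retrieve([1.0, 0.0], seeds=["meeting"])
causal_hits = graph.retrieve([0.0, 1.0], seeds=["meeting"])
print(temporal_hits)
print(causal_hits)
```

Both edges have identical strength and confidence, so the ordering difference between the two queries comes only from the relation gate. That is the behavior a static similarity search over a flat vector store cannot express.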